CoreWeave has grown from its 2017 launch into one of the most talked-about specialized clouds for GPU computing. Built for large-scale AI and high-fidelity graphics, it focuses on the performance and availability that modern models and media pipelines demand. Its rapid growth reflects a clear bet on accelerated compute as the backbone of today’s innovation.

The platform targets machine learning teams, generative AI startups, VFX and rendering studios, research labs, and enterprises that need reliable access to premium GPUs. CoreWeave is known for offering current-generation NVIDIA hardware, low-latency networking, and Kubernetes-native orchestration that simplifies scaling production workloads. Flexible options, from on-demand capacity to reservations, help teams balance speed, control, and cost.

Positioned as a focused alternative to general-purpose clouds, CoreWeave emphasizes throughput, predictable performance, and fast start times. Developers appreciate its container-first workflow, robust APIs, and integrations that fit modern MLOps and render pipelines. These strengths, paired with transparent pricing and attentive support, explain why CoreWeave is a leading name in GPU cloud infrastructure.

Key Criteria for Evaluating CoreWeave Competitors

Choosing an alternative to CoreWeave starts with understanding how each provider performs on cost, capacity, and operational fit. The right choice aligns GPU availability, networking, and tooling with your workload priorities. Use the following criteria to compare options with confidence.

- GPU availability and roadmap: Evaluate access to current-generation GPUs, multi-GPU configurations, and future hardware commitments. Consistent supply reduces queue times and keeps projects on schedule.

- Pricing and total cost: Compare on-demand, reserved, and spot options, as well as egress, storage, and support fees. Transparent billing and predictable discounts help control unit economics.

- Performance and networking: Look for low-latency fabrics, high-bandwidth interconnects, and optimized storage paths. Training speed and inference throughput depend on these fundamentals.

- Scalability and orchestration: Assess Kubernetes support, autoscaling, job schedulers, and multi-tenant isolation. Mature orchestration shortens deployment cycles and simplifies operations.

- Reliability and SLAs: Review uptime commitments, incident history, and redundancy tiers. Strong SLAs and clear remediation policies protect production workloads.

- Security and compliance: Confirm data encryption, access controls, and certifications such as SOC 2 and ISO 27001. Regional data residency and private networking can be critical.

- Developer experience and ecosystem: Check APIs, SDKs, Terraform modules, and integrations with MLOps tools. Good documentation and quick-start templates speed onboarding.

- Support and services: Compare support tiers, response times, and solution engineering resources. Dedicated help during migrations and scaling events reduces risk.
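One way to apply these criteria consistently is a weighted scoring matrix. The sketch below is only an illustration: the weights, the 1 to 5 scoring scale, and the names `WEIGHTS`, `weighted_score`, and `rank_providers` are assumptions made for this example, not part of any provider's tooling. Adjust the weights to reflect your own workload priorities.

```python
from typing import Dict, List, Mapping, Tuple

# Hypothetical weights for the eight criteria above (assumed values; tune to taste).
WEIGHTS: Dict[str, float] = {
    "gpu_availability": 0.20,
    "pricing": 0.20,
    "performance": 0.15,
    "scalability": 0.10,
    "reliability": 0.10,
    "security": 0.10,
    "developer_experience": 0.10,
    "support": 0.05,
}


def weighted_score(scores: Mapping[str, float],
                   weights: Mapping[str, float] = WEIGHTS) -> float:
    """Return the weighted average of per-criterion scores (assumed 1-5 scale)."""
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    total_weight = sum(weights.values())
    # Normalize by the total weight so the result stays on the input scale
    # even if the weights do not sum exactly to 1.
    return sum(weights[name] * scores[name] for name in weights) / total_weight


def rank_providers(candidates: Mapping[str, Mapping[str, float]]) -> List[Tuple[str, float]]:
    """Return (provider, score) pairs sorted from best to worst."""
    scored = [(name, weighted_score(scores)) for name, scores in candidates.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


if __name__ == "__main__":
    # Placeholder providers and scores, invented purely for illustration.
    candidates = {
        "provider_a": {name: 3 for name in WEIGHTS},
        "provider_b": {**{name: 3 for name in WEIGHTS}, "pricing": 5},
    }
    for name, score in rank_providers(candidates):
        print(f"{name}: {score:.2f}")
```

Scores in a matrix like this are judgment calls, so it works best as a way to make trade-offs explicit across a team rather than as a precise measurement.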

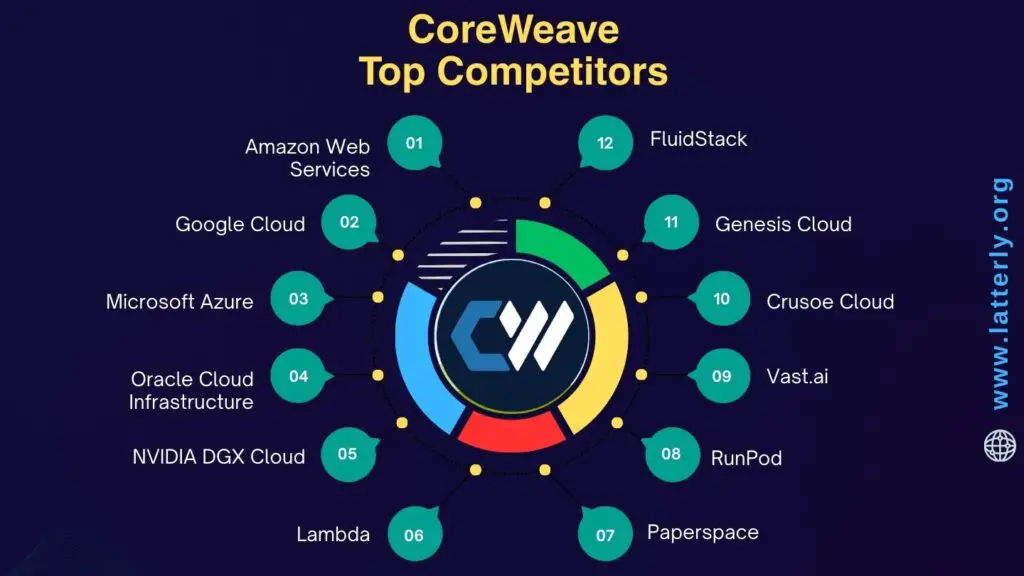

Top 12 CoreWeave Competitors and Alternatives

Amazon Web Services

Among hyperscalers, Amazon Web Services offers one of the broadest GPU compute portfolios for AI, HPC, and rendering. The platform combines mature networking, storage, and orchestration services with global availability zones. Enterprises value its scale, partner ecosystem, and reliability.

- Extensive GPU instance families such as P5, P4d, and G5 serve training, inference, and graphics workloads across regions with deep capacity and mature SLAs.

- High-performance networking through Elastic Fabric Adapter and placement groups enables multi-node training and low-latency cluster communication.

- Integrated data services like FSx for Lustre, EFS, S3, and AWS Batch streamline pipelines from data ingest to large-scale distributed training.

- Managed orchestration with EKS, ECS, and SageMaker provides options from Kubernetes control to fully managed ML workflows.

- Spot Instances, Savings Plans, and Reserved Instances offer cost management levers that can rival specialized providers for sustained usage.

- Security and compliance certifications, identity controls, and VPC networking meet stringent enterprise and regulated industry requirements.

- Customers consider AWS an alternative to CoreWeave when they need global reach, a full stack of cloud services, and predictable enterprise support.

- Deep integrations with MLOps tools, CI/CD pipelines, and a vast marketplace reduce time to production for AI and 3D workloads.

Google Cloud

Google Cloud is widely recognized for its AI focus and research-driven features. The platform supports modern NVIDIA GPUs and proprietary TPUs that appeal to teams pushing model scale. Strong data and analytics services complement machine learning operations.

- GPU families like A3, A2, and G2 target training with H100 or A100 and inference with L4, giving choice across performance and price tiers.

- TPU VM offerings such as v5e and v5p differentiate Google Cloud with tightly integrated accelerators for large scale training and serving.

- Vertex AI unifies data labeling, training, tuning, pipelines, and deployment, reducing operational overhead for ML teams.

- GKE delivers production-grade Kubernetes with GPU scheduling, node auto-provisioning, and ecosystem add-ons for MLOps.

- Data capabilities through BigQuery, Dataproc, and Filestore help bridge analytics, data engineering, and model training workflows.

- Global private backbone networking and regional capacity support demanding AI workloads that need consistency and low jitter.

- Organizations consider it a CoreWeave alternative for end-to-end AI tooling, TPU access, and integrated data platforms.

- Competitive committed use discounts and, on eligible machine types, sustained use discounts can improve economics for long-running training clusters.

Microsoft Azure

Microsoft Azure brings enterprise depth, Windows and hybrid strengths, and a growing portfolio of GPU-powered instances. Its investments in high-speed networking and HPC make it attractive for large-scale AI training. Tight integration with developer and productivity stacks adds value.

- ND and NC series instances deliver A100 and H100 options for training, while NV families target visualization, rendering, and inference.

- InfiniBand-enabled clusters and Azure HPC Cache support multi-node training, scalable storage throughput, and low-latency communication.

- Azure Machine Learning orchestrates experiments, distributed training, model registry, and deployment with enterprise governance.

- AKS provides GPU aware Kubernetes, node pools, autoscaling, and policy controls for production MLOps.

- Hybrid capabilities with Azure Arc and on premises integration appeal to organizations with compliance or data gravity needs.

- Security, identity, and compliance certifications align with global regulatory frameworks, easing adoption in regulated industries.

- Teams consider Azure an alternative to CoreWeave when they require enterprise agreements, Microsoft ecosystem alignment, and broad regional availability.

- Cost controls through Azure Reservations and Savings Plans help optimize long running GPU fleets for training and serving.

Oracle Cloud Infrastructure

Oracle Cloud Infrastructure focuses on high-performance networking and cost-efficient I/O, which benefits AI and HPC clusters. Its bare metal and RDMA options are designed for low-latency training at scale. Partnerships with NVIDIA further extend its GPU offerings.

- Bare metal GPU shapes and cluster networking provide predictable performance for distributed training and simulation workloads.

- Competitive egress and storage pricing can reduce total cost of ownership for data intensive pipelines.

- Integration with NVIDIA platforms, including DGX Cloud availability, offers turnkey access to optimized AI stacks.

- Block volumes, Object Storage, and File Storage deliver multiple tiers to match checkpointing, dataset staging, and archive needs.

- Resource Manager and Terraform friendly tooling simplify reproducible infrastructure for MLOps teams.

- Global regions and expanding GPU capacity make OCI more accessible to enterprises adopting AI at scale.

- As a CoreWeave alternative, OCI suits customers seeking a balance of cost and performance, RDMA networking, and enterprise support.

- Flexible tenancy and security controls help align cloud deployments with strict governance requirements.

NVIDIA DGX Cloud

NVIDIA DGX Cloud offers a managed, full-stack environment for training and fine-tuning frontier-scale models. Provided through partners like OCI and Azure, it emphasizes performance and software integration. Teams use it to accelerate time to accuracy with minimal infrastructure work.

- DGX H100-class nodes, high-speed networking, and tuned software stacks deliver predictable, high-throughput training performance.

- NVIDIA AI Enterprise, NGC containers, and CUDA libraries come prevalidated, reducing integration risk and setup time.

- Support for frameworks like PyTorch, TensorFlow, and distributed training libraries helps teams scale quickly.

- Managed clusters and enterprise support simplify operations, making it appealing to lean ML platform teams.

- Integration with NeMo, Triton Inference Server, and NIM microservices speeds up model lifecycle from training to deployment.

- Organizations evaluate DGX Cloud as a CoreWeave alternative when they want a turnkey NVIDIA optimized environment.

- Predictable performance and dedicated capacity address concerns about noisy neighbors and spot interruptions.

- Close coupling of hardware, drivers, and libraries reduces performance regression risk during upgrades.

Lambda

Lambda is a specialist GPU cloud built by Lambda Labs, known for serving AI startups and research teams. It balances on-demand instances with reserved capacity for stable training schedules. The company also offers on-premises hardware, creating a hybrid path.

- GPU instances across A100, H100, and workstation-class cards support training, fine-tuning, and high-throughput inference.

- Prebuilt deep learning images and drivers reduce setup, letting teams focus on experiments instead of environment plumbing.

- Reserved and dedicated offerings provide consistent performance for multi-week training runs and compliance needs.

- Managed Kubernetes and cluster creation tools help scale workloads without heavy platform engineering overhead.

- Competitive pricing and clear quotas attract startups seeking predictable cost for iterative research.

- 24/7 support and a focused roadmap align with AI use cases rather than generalized cloud services.

- Customers consider Lambda as a CoreWeave alternative for simplicity, cost transparency, and AI-specific optimizations.

- Optional on-premises systems enable hybrid workflows and data locality for sensitive datasets.

Paperspace

Paperspace, now part of DigitalOcean, emphasizes ease of use for developers and small teams exploring GPUs. From notebooks to production VMs, it lowers the barrier to entry. The platform is popular for prototyping, education, and light production inference.

- Simple console and API make spinning up GPU VMs and notebooks fast for newcomers and experienced users alike.

- Gradient provides managed Jupyter environments, experiment tracking, and pipelines suited to rapid iteration.

- A range of GPUs, including consumer and data center cards, suits budgets from hobby projects to startup workloads.

- Team collaboration features and shared workspaces streamline classroom, hackathon, and small team workflows.

- Integration with object storage and model deployment tools supports end-to-end ML lifecycles.

- As a CoreWeave alternative, Paperspace appeals to teams prioritizing simplicity and quick starts over deep networking features.

- Predictable pricing and credits help control costs during experimentation and early product stages.

- DigitalOcean stewardship adds a broader developer ecosystem and marketplace integrations.

RunPod

RunPod stands out with serverless-style GPU endpoints and persistent pods for ML training and inference. Its template-based workflows make deployments fast and reproducible. Teams value the mix of low-friction setup and cost control.

- Serverless inference endpoints autoscale with demand, reducing idle GPU spend and operational overhead.

- Persistent pods support fine-tuning and training with customizable images and storage mounting options.

- Community templates and curated stacks accelerate adoption of popular frameworks and serving toolchains.

- Usage-based pricing and marketplace supply give budget flexibility for bursty workloads and experiments.

- Kubernetes underpinnings enable portable workflows and easier migration as needs evolve.

- RunPod is considered a CoreWeave alternative when fast deployment and serverless inference are top priorities.

- Observability features and simple dashboards help monitor performance, cost, and reliability at a glance.

- API-centric design integrates smoothly with CI/CD pipelines and automated retraining jobs.

Vast.ai

As an independent marketplace, Vast.ai connects buyers with distributed GPU supply across many providers. This model can unlock attractive prices and hardware variety. Advanced users leverage it to assemble cost-effective clusters.

- Marketplace dynamics uncover deals on A100, H100, and consumer GPUs, letting teams match budget and performance.

- Flexible filters, benchmarking, and verified listings help evaluate hosts for stability and throughput.

- Bring-your-own-image workflows and SSH access give control for custom stacks and niche frameworks.

- Spot-like economics suit elastic training and inference where jobs can be scheduled around availability.

- Geographic diversity enables data locality and latency optimization for specific regions and markets.

- It is a CoreWeave alternative for teams that prioritize price discovery and hardware choice over managed experiences.

- Users should plan for additional operational tooling to harden reliability, security, and observability.

- Community and documentation provide guidance for orchestration, checkpointing, and job resumption strategies.

Crusoe Cloud

Crusoe Cloud focuses on sustainable, low-cost compute by colocating data centers near stranded energy and optimizing power usage. Its GPU catalog targets AI training and HPC. Organizations adopt it to align performance goals with sustainability commitments.

- GPU instances featuring A100 and H100 aim for strong price-performance by leveraging efficient power and cooling.

- High-speed networking and HPC-oriented storage support multi-node training, simulation, and scientific workloads.

- Kubernetes and Slurm options accommodate both cloud-native ML teams and traditional HPC users.

- Environmental positioning resonates with companies that report on carbon intensity and energy sourcing.

- Transparent pricing and capacity planning assist long-running training schedules and predictable research cycles.

- As a CoreWeave alternative, Crusoe appeals to cost-conscious teams with sustainability goals and cluster-scale needs.

- Private networking and security features support enterprise isolation and data protection requirements.

- Professional services can help migrate pipelines and optimize distributed training performance.

Genesis Cloud

Genesis Cloud provides Europe-based GPU infrastructure with a focus on compliance and data residency. It is well suited for customers that need GDPR alignment. The service balances performance, pricing, and support for AI workloads.

- GPU options include data center and workstation-class cards for training, tuning, and high-throughput inference.

- European regions assist with sovereignty, privacy policies, and localization requirements.

- S3-compatible object storage and snapshot features support datasets, checkpoints, and reproducible environments.

- API and Terraform support enable infrastructure as code and automated scaling strategies.

- Predictable pricing and reserved capacity can stabilize budgets for multi-month projects.

- Customers view Genesis Cloud as a CoreWeave alternative when EU residency, compliance, and regional support are critical.

- Support for popular ML images and drivers shortens setup time and reduces dependency conflicts.

- Network peering and private connectivity options improve security posture for sensitive data pipelines.

FluidStack

FluidStack offers high-performance GPUs with an emphasis on competitive pricing and dedicated capacity. The company serves AI training, rendering, and batch compute users. Its simplicity appeals to teams that want fast provisioning without heavy vendor lock-in.

- Access to H100, A100, and L40S-class GPUs provides flexibility across training and inference workloads.

- Dedicated instances minimize noisy-neighbor risk, improving throughput for long training runs and rendering queues.

- Straightforward control panel and APIs enable rapid spin up, teardown, and scaling across projects.

- Snapshotting and image management accelerate environment cloning, team onboarding, and reproducibility.

- Support and capacity planning services help align supply with release schedules and research milestones.

- It is considered an alternative to CoreWeave for teams seeking cost-effective, focused GPU infrastructure.

- Optional private networking and firewalling enhance security for production MLOps pipelines.

- Transparent, negotiable pricing for committed usage can deliver favorable economics over time.

Top 3 Best Alternatives to CoreWeave

AWS

AWS stands out for unmatched global scale, mature AI services, and an enormous partner ecosystem. It offers consistent enterprise-grade security, networking, and compliance across many regions.

- Broad GPU catalog including NVIDIA A100 and H100 in select regions, plus rich storage and networking options for high throughput.

- End-to-end ML tooling with Amazon SageMaker, EKS, Batch, and integrated data services to accelerate production.

- Diverse pricing levers such as On-Demand, Savings Plans, and Spot Instances to manage cost at scale.

Best for enterprises and teams that need global availability, strong governance, and tight integration with a full cloud stack.

Google Cloud

Google Cloud stands out with an AI-first platform, streamlined ML workflows in Vertex AI, and exclusive access to Google TPUs. Its high-performance networking and storage help drive fast training and inference.

- Access to NVIDIA H100 powered A3 instances and multiple TPU generations for large-scale training efficiency.

- Seamless data-to-AI pipelines with BigQuery, Dataflow, and Vertex AI for rapid experimentation to production.

- Transparent pricing with per-second billing and committed use discounts for predictable planning.

Best for research groups and product teams prioritizing cutting-edge AI infrastructure, robust MLOps, and strong data integration.

Microsoft Azure

Azure stands out for enterprise security, hybrid flexibility, and deep partnerships across the AI ecosystem. Azure Machine Learning and strong DevOps integrations support regulated, production-grade deployments.

- ND-series instances with the latest NVIDIA GPUs, including H100 availability in select regions for state-of-the-art performance.

- Comprehensive governance with Microsoft Entra ID (formerly Azure AD), Azure Policy, and confidential computing options to meet compliance needs.

- Hybrid and on-prem integration via Azure Arc and ExpressRoute to support data gravity and sovereignty.

Best for organizations standardized on Microsoft, regulated industries, and teams needing hybrid cloud with enterprise governance.

Final Thoughts

The GPU cloud market is vibrant, and there are many capable CoreWeave alternatives. Hyperscalers offer unparalleled breadth, compliance, and global footprint, while specialized providers can compete on price, availability, or niche workflows. This variety gives teams confidence that they can find the right fit for performance and budget.

The best choice depends on your models, timelines, data location, governance needs, and tooling preferences. Define success metrics, run small pilots, and benchmark across two or three providers before committing. With clear requirements and disciplined testing, you can select a platform that delivers reliable speed, predictable costs, and long-term flexibility.
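To compare pilot results on equal terms, it can help to reduce each benchmark to a single cost-per-work figure. The sketch below assumes you have measured sustained cluster throughput (for example tokens or samples per second) and know the per-GPU hourly rate. The function name `cost_per_million_units` and all numbers in the example are hypothetical and do not reflect any provider's actual pricing.

```python
def cost_per_million_units(hourly_rate_usd: float,
                           gpu_count: int,
                           throughput_per_sec: float) -> float:
    """Return USD spent per one million work units (tokens, samples, frames).

    hourly_rate_usd:    price of one GPU for one hour
    gpu_count:          number of GPUs in the pilot cluster
    throughput_per_sec: measured work units per second for the whole cluster
    """
    if gpu_count <= 0 or throughput_per_sec <= 0:
        raise ValueError("gpu_count and throughput_per_sec must be positive")
    cluster_cost_per_hour = hourly_rate_usd * gpu_count
    units_per_hour = throughput_per_sec * 3600
    return cluster_cost_per_hour / units_per_hour * 1_000_000


if __name__ == "__main__":
    # Made-up pilot measurements for two placeholder providers.
    pilots = {
        "provider_a": {"hourly_rate_usd": 2.00, "gpu_count": 8, "throughput_per_sec": 40_000},
        "provider_b": {"hourly_rate_usd": 2.50, "gpu_count": 8, "throughput_per_sec": 55_000},
    }
    for name, pilot in pilots.items():
        print(f"{name}: ${cost_per_million_units(**pilot):.4f} per million units")
```

A figure like this ignores egress, storage, and support fees, so treat it as one input alongside the other criteria rather than a full total-cost estimate.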